Introducing S&P Global Marketplace generative AI search

S&P Global Marketplace now lets customers ask questions about its datasets in plain language — from basic coverage queries to detailed answers drawn from user guides — powered by Large Language Models (LLMs).

Authors: Drew Titus, Colleen Kimball, Jeremy Lopez, Sophia Zhi, David Ariyibi, Stu Harvey, Yulia Shchadilova, Lupe Carino

S&P Global is the world’s foremost provider of data. Advancements in technology have led to increased availability and diversity of data coupled with heightened demand for data from companies of all types.

S&P Global has capitalized on this evolution through the creation of the S&P Global Marketplace. Marketplace serves as a single platform to discover and explore datasets and solutions from across S&P Global, along with those from curated third-party providers. Marketplace also helps users find the right data for their needs through robust descriptions, sample data, data dictionaries, interactive visualizations, supporting research and documentation, and more.

As S&P Global continues to innovate, launch new products, and integrate offerings following its merger with IHS Markit, Marketplace continues to grow. To keep pace with that growth, the Marketplace team explored various options to improve and streamline how users discover data. Around the same time, generative AI was gaining broad momentum across the industry. In partnership with Kensho, the Marketplace team arrived at the idea of evolving the existing Marketplace search to support question-based search across the platform.

Since the initial launch of Marketplace, the Marketplace and Kensho teams have collaborated closely, with Kensho Link and Kensho Scribe being some of the first solutions available on Marketplace. This strong partnership, coupled with Kensho’s expertise, led to an enhanced natural language search functionality utilizing large language models (LLMs). The initial release of Marketplace Generative AI search enables users to ask an array of questions — from simple data questions, such as a dataset’s geographic coverage or history, to more complex questions where answers are pulled from dataset user guides. You can ask questions about the datasets and solutions on Marketplace by going to any tile on the platform and asking a question in the new on-tile search bar.

Traditional search engines

A traditional lexical search engine breaks a user’s query into individual terms and returns results based on how well those terms exactly match documents stored in the search engine’s index. The relevance of those documents is then scored using a measure like term frequency-inverse document frequency (TF-IDF), which treats a document as more relevant the more often a search term appears in it, while down-weighting terms that appear in many documents across the index.
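To make the TF-IDF idea concrete, here is a minimal Python sketch of the classic formulation: each query term contributes its count in a document multiplied by log(N / df), where N is the number of documents and df is the number of documents containing the term. Tokenization and scoring are deliberately simplified for illustration; this is not how Marketplace or any particular engine scores results, and modern Lucene-based engines such as Elasticsearch default to a refinement of this idea called BM25.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split on whitespace; real engines also strip punctuation, stem, etc.
    return text.lower().split()


def tf_idf_scores(query: str, docs: list[str]) -> list[float]:
    """Score each document against the query by summing TF * IDF over query terms."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(docs)
    # Document frequency: the number of documents that contain each term.
    df = Counter(term for toks in tokenized for term in set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)  # term counts within this document
        score = 0.0
        for term in tokenize(query):
            if term not in df:
                continue  # the term appears in no document, so it contributes nothing
            idf = math.log(n_docs / df[term])  # rarer terms get a larger weight
            score += tf[term] * idf
        scores.append(score)
    return scores
```

A term that appears in every document gets an IDF of log(1) = 0, so it adds nothing to any score, which is exactly the down-weighting of common words described above.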

The advantage of lexical search is that it is a fast and efficient way to find exact matches in a large collection, which makes it useful for finding specific names and other information that is unlikely to change. Several mature solutions already exist, such as Elasticsearch and Apache Solr, both built on the Apache Lucene search library. These solutions offer rich search functionality along with various options for data storage and retrieval.

However, lexical search leaves room for improvement. Even when plenty of great data and documentation are available, it can still be difficult for users to find what they are looking for. What if a user doesn’t use the same terms that appear in the underlying documents? Even if the user does use the right terms, what if better documentation exists for their use case, but they never find it because of the limits of traditional search? And if a dataset is full of domain-specific jargon, a non-expert may struggle to search it effectively. As a result, a lot of good content can be hard to discover and end up underutilized in a traditional search experience. Finally, what if a user simply wants an answer, rather than a link to a document they must read through to determine whether it meets their needs? A question-answering system like that can be difficult to build as an additional layer on top of a search engine.
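The vocabulary-mismatch problem is easy to see with a toy inverted index, the core data structure behind lexical engines like Lucene. The sketch below assumes whitespace tokenization and AND semantics (every query term must match); the function names and sample text are illustrative only.

```python
from collections import defaultdict


def build_index(docs: dict[str, str]) -> dict[str, set[str]]:
    """Map each lowercase token to the IDs of the documents that contain it."""
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for token in text.lower().split():
            index[token].add(doc_id)
    return index


def lexical_search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return IDs of documents containing every query token (exact match, AND semantics)."""
    token_sets = [index.get(tok, set()) for tok in query.lower().split()]
    if not token_sets:
        return set()
    return set.intersection(*token_sets)
```

With a hypothetical document described as "daily closing prices for listed equities", the query "closing prices" finds it, but "stock prices" returns nothing: "stock" never appears in the text, even though it means roughly the same thing as "equities".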

It turns out that, for many applications, this last problem is much less difficult to solve today, thanks to LLMs enhanced with S&P Global’s own data from Marketplace.

LLMs

If you’ve been following tech and AI trends over the past 18 months, you’ve likely heard about large language models (LLMs), such as ChatGPT, and some of their applications. LLMs are AI models trained on huge corpora of text, and they typically perform well on a variety of tasks, such as question answering, information retrieval, and, in some cases, even logical and mathematical reasoning. Their training datasets are so large that the models often perform fairly well even on novel tasks that the user describes in a prompt. This ability to generalize across tasks can make LLMs much more useful than earlier generations of language models, and it makes them a strong fit for a project such as building advanced search functionality for the S&P Global Marketplace.

To perform question answering, we can start with a question and a set of associated text snippets. Given a carefully constructed prompt that includes the question, the snippets, and any other relevant context we have, a capable LLM can find the answer within the provided text.
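A minimal sketch of that kind of prompt assembly follows. The instruction wording, the numbered-passage layout, and the `build_qa_prompt` name are assumptions made for illustration, not the prompt Marketplace actually uses.

```python
def build_qa_prompt(question: str, snippets: list[str], context_notes: str = "") -> str:
    """Assemble a question-answering prompt from a question and retrieved text snippets."""
    # Number each snippet so the model (and a reader) can refer back to specific passages.
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(snippets, start=1))
    parts = [
        "Answer the question using only the numbered passages below.",
        "If the passages do not contain the answer, say you don't know.",
    ]
    if context_notes:
        # Optional extra information we already know, e.g. which dataset the user is viewing.
        parts.append(f"Additional context: {context_notes}")
    parts.append(f"Passages:\n{numbered}")
    parts.append(f"Question: {question}")
    parts.append("Answer:")
    return "\n\n".join(parts)
```

Numbering the passages gives the model a simple way to point back to specific snippets, and the explicit "say you don't know" instruction is a common way to discourage answers that aren't grounded in the provided text.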

Retrieval-augmented generation

Although state-of-the-art LLMs are good at many things, they do have limitations. As their name suggests, their original purpose was to model natural language, not to act as an information storage system. If you ask a typical LLM about something that happened a few seconds ago, it won’t be able to answer, because that information isn’t part of its training data. You could retrain the model to incorporate new data, but training is a costly and time-consuming process, and some applications need to incorporate new data within seconds.

Instead of training new models every time new information needs to be analyzed, the AI field has developed techniques for feeding the relevant information to the LLM along with the user’s input. The model doesn’t need to memorize everything in order to return it when prompted; it just needs to read the provided information and determine whether and how it is relevant to the user’s request.

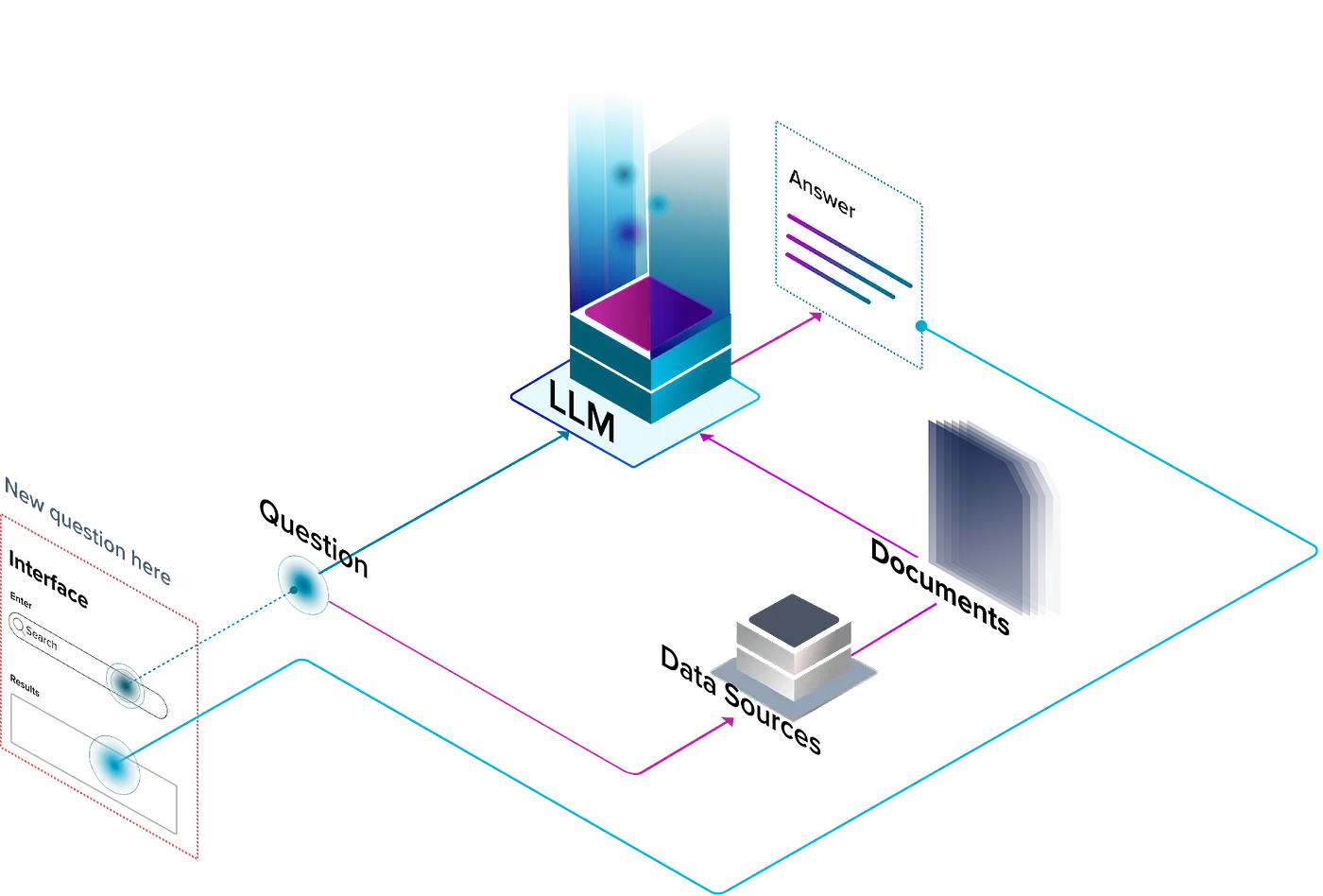

One of the most popular paradigms for injecting novel information into an LLM is retrieval-augmented generation (RAG) — augmenting the quality of the LLM-generated response by retrieving relevant information first. In RAG systems, tools are provided to allow for data retrieval from different sources. Depending on the application, these tools could be things like corpora of text documents to search through, databases of tabular data, chat history for chatbots, and, in appropriate cases, even web search engines.

An example of how a simple RAG system might be designed
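The retrieve-then-generate loop can be sketched in a few dozen lines of Python. To keep it self-contained, this sketch ranks text chunks by cosine similarity of bag-of-words vectors, a stand-in for the learned embeddings and vector index a production RAG system would typically use, and it takes the LLM call as a `generate` function argument, a hypothetical placeholder for whatever model client is in use. None of these names come from the Marketplace implementation.

```python
import math
from collections import Counter
from typing import Callable


def bow_vector(text: str) -> Counter:
    # Bag-of-words term counts: a simple stand-in for a learned embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Retrieval step: return the k chunks most similar to the question."""
    q = bow_vector(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, bow_vector(c)), reverse=True)
    return ranked[:k]


def answer(question: str, chunks: list[str], generate: Callable[[str], str], k: int = 2) -> str:
    """Augmentation and generation: put retrieved chunks in the prompt, then call the LLM."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks, k))
    prompt = (
        "Use only the context below to answer the question.\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )
    return generate(prompt)  # `generate` is a hypothetical LLM client function
```

Passing `generate` in as a parameter keeps retrieval and prompt assembly testable without a live model; for example, a function that simply echoes its input lets you inspect exactly what context the model would receive.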

Back to Marketplace search

With this background, we can return to our original goal: powering a more sophisticated exploration and discovery experience on the S&P Global Marketplace.

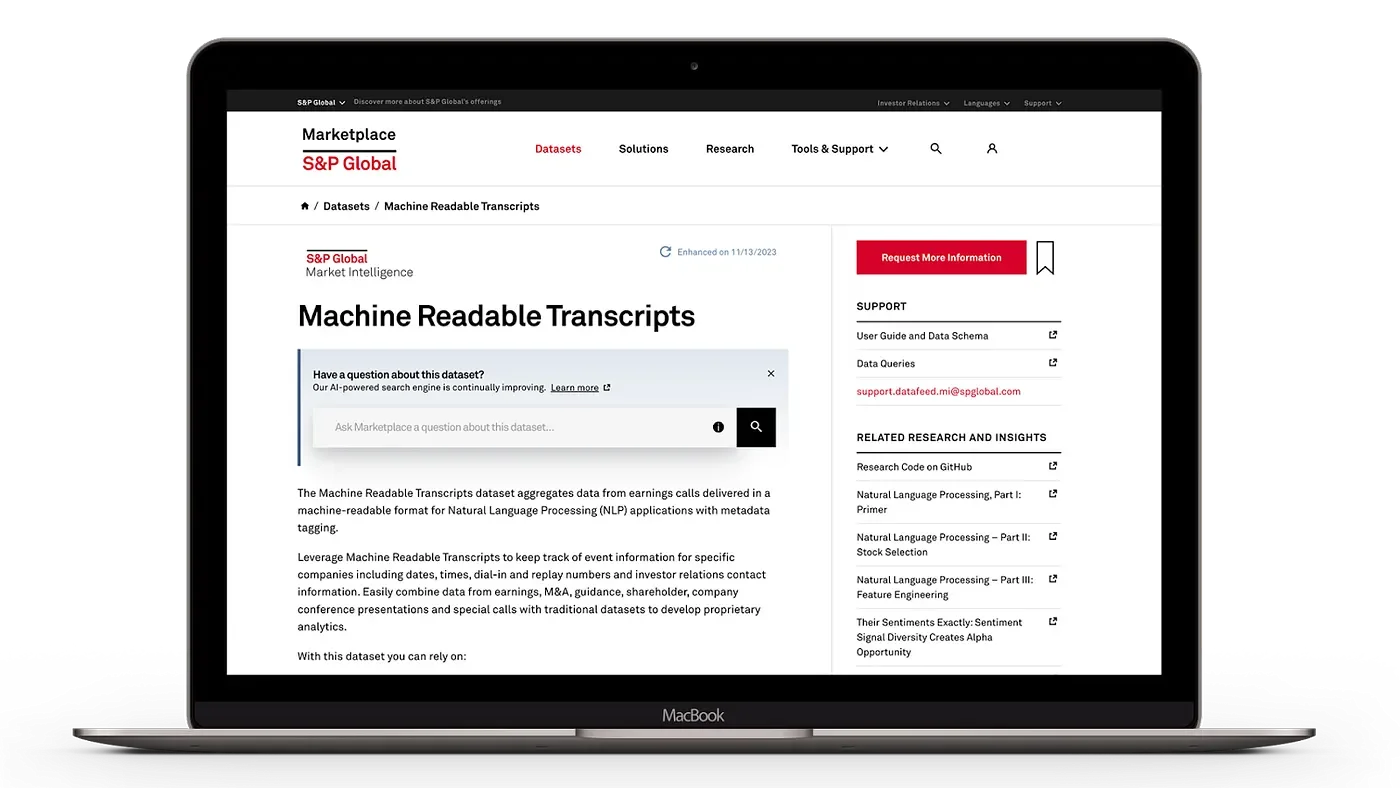

A tile on the S&P Global Marketplace

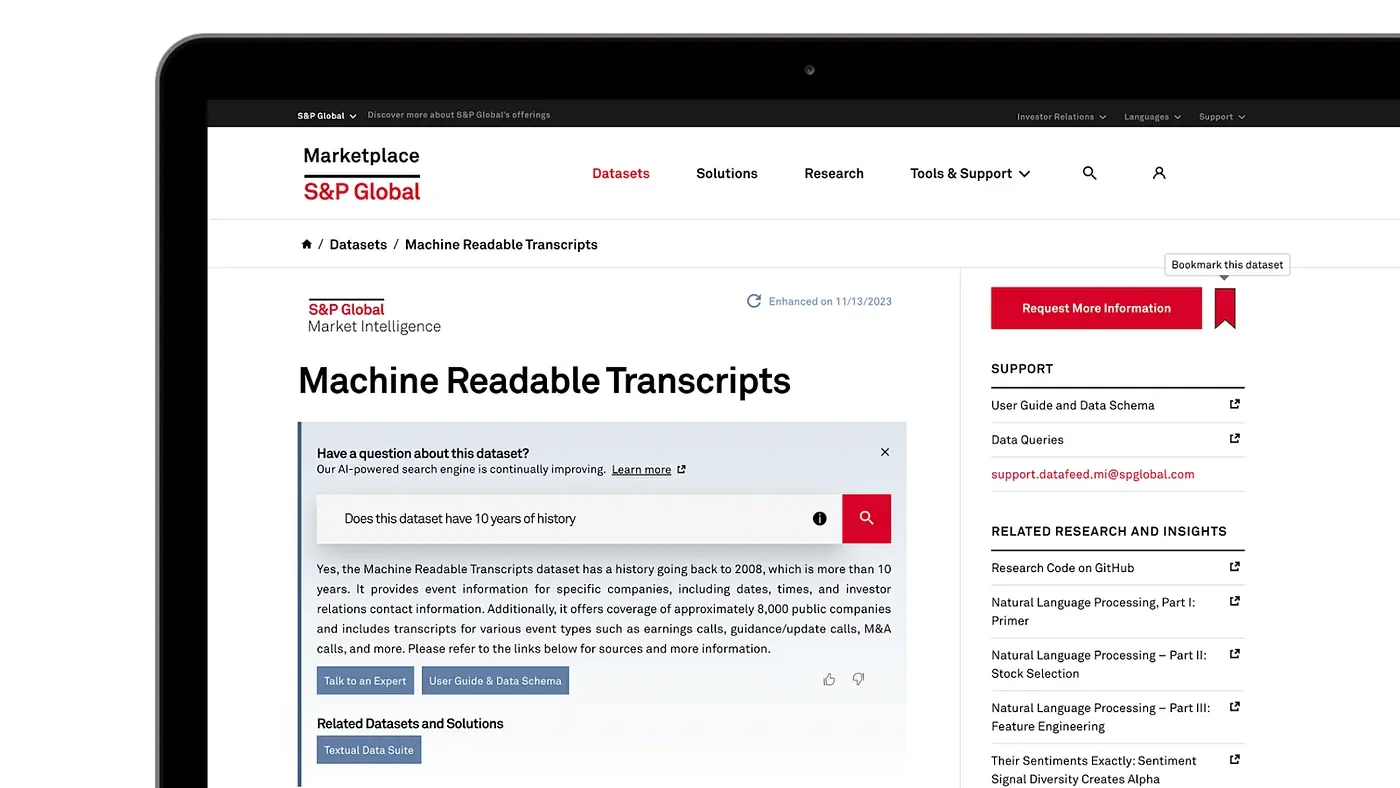

While LLMs can provide an improved experience, there is general awareness of hallucinations and other potential pitfalls. That’s why it’s important that Marketplace doesn’t just provide the answer, but also a click-through to the source documentation.

Results from Generative AI search on a tile
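One way to support that click-through is to carry each retrieved passage’s source link alongside its text and return those links with the generated answer. The sketch below is a hypothetical illustration; `Passage`, `SearchAnswer`, and `attach_sources` are invented names, not part of Marketplace.

```python
from dataclasses import dataclass, field


@dataclass
class Passage:
    text: str
    source_url: str  # link back to the user guide or page this passage came from


@dataclass
class SearchAnswer:
    answer: str
    sources: list[str] = field(default_factory=list)


def attach_sources(answer_text: str, passages: list[Passage]) -> SearchAnswer:
    """Pair a generated answer with de-duplicated links to the passages it was given."""
    seen: list[str] = []
    for p in passages:
        if p.source_url not in seen:  # keep the first occurrence, preserving order
            seen.append(p.source_url)
    return SearchAnswer(answer=answer_text, sources=seen)
```

Note that this attaches links to every passage supplied to the model, which tells users where the context came from but does not by itself prove that the answer is faithful to those passages.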

The enhanced Marketplace search has been a collaborative effort between Kensho and the Marketplace team, drawing on Kensho’s engineering and machine learning capabilities to improve the user experience of an existing product. With so much research underway across the tech industry into using LLMs in applications such as search, we can expect search products to keep improving as new advances are introduced.

Excited to try? Check out the AI-powered search engine on any product or dataset page on https://www.marketplace.spglobal.com.